Spark

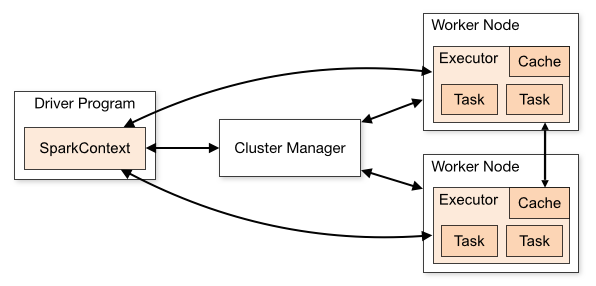

Apache Spark is an open-source processing engine that you can use to process Hadoop data. The diagram above shows the components involved in running Spark jobs. See Spark Cluster Mode Overview for further details on the different components.

Spark can run on any of the following cluster managers:

- Spark's own standalone cluster manager

- YARN

- Apache Mesos
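In Spark, the cluster manager is selected through the master URL passed to `spark-submit --master`. The following is a minimal sketch of how that choice maps to a command line. The helper names `master_url` and `spark_submit_command` are hypothetical and not part of Spark or MapR, the host names are placeholders, and the ports 7077 and 5050 are only the conventional defaults for the standalone and Mesos masters, so your cluster may use different values.

```python
import shlex


def master_url(manager, host=None, port=None):
    """Return the Spark master URL for a cluster manager (hypothetical helper)."""
    if manager == "yarn":
        # YARN needs no host: the ResourceManager address comes from the Hadoop configuration.
        return "yarn"
    if manager not in ("standalone", "mesos"):
        raise ValueError(f"unknown cluster manager: {manager!r}")
    if host is None:
        raise ValueError(f"{manager} requires a master host")
    if manager == "standalone":
        # Assumed default port for a standalone master.
        return f"spark://{host}:{port or 7077}"
    # Assumed default port for a Mesos master.
    return f"mesos://{host}:{port or 5050}"


def spark_submit_command(manager, app, host=None, port=None):
    """Build a spark-submit command line as a single shell-quoted string."""
    args = ["spark-submit", "--master", master_url(manager, host, port), app]
    return " ".join(shlex.quote(arg) for arg in args)


if __name__ == "__main__":
    # Placeholder application file and host names, for illustration only.
    print(spark_submit_command("yarn", "my_app.py"))
    print(spark_submit_command("standalone", "my_app.py", host="master.example.com"))
    print(spark_submit_command("mesos", "my_app.py", host="mesos.example.com"))
```

The sketch only assembles the command string. Running it against a real cluster still requires a Spark installation configured for the chosen cluster manager.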

This section provides documentation about configuring and using Spark with MapR, but it does not duplicate the Apache Spark documentation.

You can also refer to additional documentation available on the Apache Spark Product Page.